California-based startup Subtle Computing is building voice isolation models designed to help computers understand speech in noisy environments. The work addresses a growing need in the voice AI sector, where applications and services that rely on voice recognition often struggle with background noise.

The demand for voice AI applications has surged, with companies like Granola, Fireflies, Fathom, and Read AI gaining traction among users and investors. Numerous established firms, including OpenAI, ClickUp, and Notion, have integrated advanced voice transcription capabilities, while emerging developers like Wispr Flow and Willow are enhancing voice dictation features. Additionally, hardware innovators such as Plaud and Sandbar are facilitating voice transcription through specialized devices, transforming user interactions through AI-driven insights.

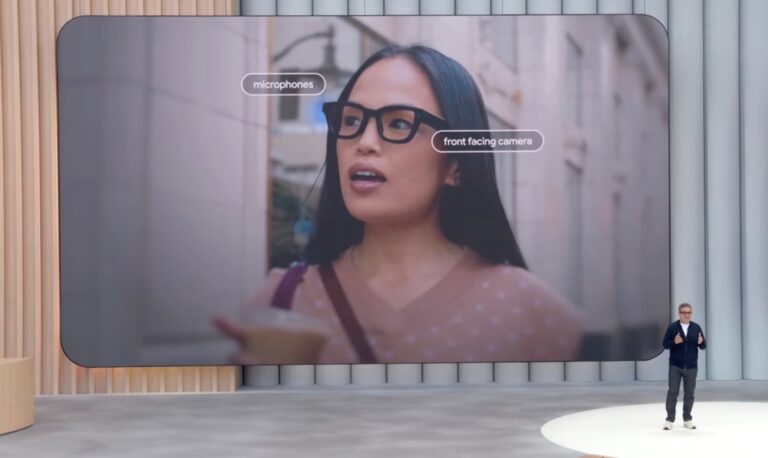

A persistent challenge for these technologies is accurately capturing voice input amid distracting sounds common in coffee shops and bustling offices. To combat this issue, Subtle Computing has developed an end-to-end voice isolation model that effectively transcribes spoken words even in cacophonous settings. According to co-founder Tyler Chen, many existing solutions send voice data to the cloud for processing, an approach that can be inefficient. Instead, Subtle Computing’s model is tailored to the acoustic properties of specific devices and adapts to individual user voices, resulting in superior performance.

Chen emphasizes, “By maintaining the acoustic characteristics unique to each device, we achieve a significant performance boost compared to generic alternatives. This approach allows us to deliver personalized solutions tailored to individual users.”

Subtle Computing was founded by Chen, David Harrison, Savannah Cofer, and Jackie Yang, who met while at Stanford. The company originated from a Lean Launchpad course focused on alternative computing interfaces. As voice interaction with AI becomes commonplace, Chen highlights the pressing need for devices to accurately understand users in varied environments, whether in loud public spaces or shared offices.

The company reports that its voice isolation model can run efficiently on devices with limited processing power, requiring only a few megabytes of storage and achieving latency as low as 100 milliseconds. The company says its transcription model builds on this isolation step, which improves its accuracy.

Subtle Computing is part of Qualcomm’s voice and music extension program, which means its technology will be compatible with Qualcomm’s chips in products from original equipment manufacturers (OEMs).

The startup recently secured $6 million in seed funding led by Entrada Ventures, with participation from Amplify Partners, Abstract Ventures, and notable angel investors including Biz Stone from Twitter, Evan Sharp from Pinterest, and Johnny Ho from Perplexity. Karen Roter Davis, Managing Partner at Entrada Ventures, acknowledged that voice AI is a competitive field but said she believes Subtle Computing’s focus on voice isolation offers a fresh way to advance the market.

Davis commented, “As interactions through voice interfaces increase, the overall experience needs enhancement. Subtle Computing is tackling this challenge head-on, developing reliable solutions for extreme noise and quiet settings, making it a potential game changer in the field.”

Looking ahead, Subtle Computing has hinted at partnerships with a consumer hardware brand and an automotive company to deploy its solutions, although details remain under wraps. The company also plans to reveal its first consumer product, combining hardware and software, in the coming year.